In 2023, AI-generated video of Will Smith eating spaghetti became an internet meme for all the wrong reasons. The clip was a surreal mess of morphing faces and phantom noodles, and it perfectly captured the state of generative AI at the time: impressive in concept, rough in execution, and mostly a curiosity.

By the end of 2025, several AI video models could recreate that same scenario with synchronized audio, accurate facial expressions, and realistic motion. The spaghetti test had become an informal benchmark for the entire industry, and the gap between those two videos tells you everything about the pace of change.

Video comparison via MinChoi on X

That pace of change is exactly why I wanted to step back and look at what shifted in AI over the past year. There's a lot of noise in this space, so the goal here is to ground things in data and firsthand experience: what the numbers show, what it means for businesses and the open web, and where I think things go next.

This is a two-part series. This article covers the major advancements and impacts from 2025. Part 2 follows with my expectations for 2026.

Model and Software Advances

The model race in 2025 came down to competition and cost compression, with reasoning emerging as the year's most important new capability.

The model race

The year started with a surprise. DeepSeek, a Chinese AI lab, released V3 in late December 2024 and R1 in January 2025. R1 was a reasoning model trained primarily via large-scale reinforcement learning (its precursor, R1-Zero, skipped supervised fine-tuning entirely), and its performance approached that of leading Western models at a fraction of the cost. It sent a clear signal that the frontier wasn't going to be defined by a handful of U.S. companies (UNU).

OpenAI, Anthropic, and Google all shipped multiple flagship releases throughout the year. But the most significant shift was who else showed up. Open-weight models from DeepSeek, Moonshot (Kimi K2), and Xiaomi (MiMo) reached performance levels competitive with the major proprietary offerings, many of them building on the foundation Meta established by releasing the Llama model family as open weights. The gap that seemed insurmountable in 2023 had narrowed to the point where model choice became less about raw capability and more about cost, context windows, and ecosystem fit.

Artificial Analysis Intelligence Index

| # | Model | Score |
|---|---|---|
| 1 | Gemini 3 Pro | 73 |
| 2 | GPT-5.2 | 73 |
| 3 | Gemini 3 Flash | 71 |
| 4 | Claude Opus 4.5 | 70 |
| 5 | GPT-5.1 | 70 |
| 6 | Kimi K2 | 67 |
| 7 | MiMo V2 Flash | 66 |
| 8 | DeepSeek V3.2 | 66 |
| 9 | Grok 4 | 65 |
| 10 | Claude 4.5 Sonnet | 63 |
| 11 | Qwen3 235B | 57 |
| 12 | DeepSeek R1 | 52 |


Composite score across 10 benchmarks (0-100 scale). Data from Artificial Analysis, December 2025.

Cost compression

The cost of running frontier-quality AI dropped dramatically in 2025, though not uniformly. GPT-4 class inference that cost roughly $30 per million tokens at launch in early 2023 was available for under $3 per million tokens by mid-2025. Most providers slashed prices aggressively as efficiency improvements compounded, though Google was a notable exception.

| Provider | Early 2025 | Late 2025 | Change |
|---|---|---|---|
| OpenAI | $2.50 | $1.25 | -50% |
| Anthropic | $15.00 | $5.00 | -67% |
| Google | $1.25 | $2.00 | +60% |
| xAI | $3.00 | $0.20 | -93% |
| DeepSeek | $0.55 | $0.25 | -55% |

Flagship model input cost per 1M tokens (standard context). Sources: provider API pricing pages.
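As a quick sanity check on the Change column, here is a minimal Python sketch that recomputes each percentage from the early and late prices. The figures are copied from the table; the one price increase is attributed to Google, as the paragraph before the table indicates. The function and variable names are my own, for illustration only.

```python
# Input price per 1M tokens in USD (early 2025, late 2025), copied from the table.
prices = {
    "OpenAI": (2.50, 1.25),
    "Anthropic": (15.00, 5.00),
    "Google": (1.25, 2.00),  # assumed provider for the row with the price increase
    "xAI": (3.00, 0.20),
    "DeepSeek": (0.55, 0.25),
}


def pct_change(early: float, late: float) -> int:
    """Relative change from the early price to the late price, as a whole percent."""
    return round((late - early) / early * 100)


changes = {name: pct_change(early, late) for name, (early, late) in prices.items()}

for name, change in changes.items():
    print(f"{name:<10} {change:+d}%")
```

The recomputed values match the table once rounded to whole percentages.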

Several forces drove prices down simultaneously. DeepSeek's open-source releases in early 2025 proved that frontier-quality inference was possible at a fraction of the cost, pressuring proprietary providers to respond. Inference optimization techniques like quantization, speculative decoding, and cache improvements further reduced the compute needed per query.

The result was a reinforcing cycle: lower costs drove higher demand, which attracted more competition, which pushed prices down further. Even providers with strong market positions had to cut prices to stay competitive against cheaper open-weight alternatives. That cost compression is one of the key enablers behind everything else in this article.

Three capability leaps

Beyond the model race and cost compression, three capabilities defined what AI could actually do in 2025.

Reasoning

Models learned to think, not just predict

- Multi-step problem solving instead of next-token prediction

- Deeper, more reliable outputs that hold up under scrutiny

- Enabled agentic workflows and advanced coding tools

Video and Audio

From novelty demo to production tool in one year

- Multiple providers shipping 1080p video with synchronized audio

- OpenAI's video app hit #1 on the App Store

- Studios investing billions while AI music and voice tools went mainstream

Agentic AI

AI that takes actions, not just answers questions

- Went from near-zero to peak search interest in 2025

- Coding agents emerged as a major new software category

- Open-source agents gained rapid traction alongside proprietary tools

Gartner estimated that 40% of enterprise applications will include task-specific AI agents by 2026, up from less than 5% in 2025 (Gartner). Each of these capabilities demands more compute, more memory, and more power, and the infrastructure required to support them became one of 2025's defining stories.

Hardware and Infrastructure

None of this happens without compute, and the infrastructure side had its own major shifts in 2025.

Nvidia's Blackwell GPUs (the specialized chips that power AI workloads) shipped throughout the year, delivering up to 15x faster throughput and 25x lower cost for inference compared to the previous generation. The entire production run was sold out 12 months before units shipped. But Nvidia wasn't just building bigger data center chips. It was also making moves to bring AI processing closer to everyday users.

In September, Nvidia took a $5 billion stake in Intel, roughly 4.4% ownership, alongside a joint development agreement spanning multiple product generations. The deal covers both data center CPUs and consumer hardware, but the consumer side is the more interesting signal: Intel will produce system-on-chips with integrated Nvidia RTX chiplets, putting dedicated AI processing capabilities directly into mainstream PCs.

But the Intel deal wasn't Nvidia's only major hardware move in 2025, or even its largest.

The Groq Acquisition

Nvidia bought Groq for approximately $20 billion in December 2025, bringing their LPU (Language Processing Unit) technology in-house. The LPU takes a fundamentally different approach to inference than traditional GPUs, delivering roughly 10x the throughput while using about 85% less power.

I use Groq hardware to serve the default AI for all three of the tools on this site (Content Decoder, AI Readiness Analyzer, and UX Bench), specifically because of that inference speed advantage for straightforward request-response workloads.

Between the Intel investment and the Groq acquisition, Nvidia ended 2025 with a grip on the full AI compute stack: training GPUs, inference hardware, and a path into the x86 ecosystem that powers most of the world's computers. But the appetite for all that AI hardware created pressure far beyond GPUs.

The memory squeeze

The demand for all this hardware created a downstream crisis that most people outside of tech didn't see coming. A DDR5-6000 32GB memory kit that cost around $100 in 2024 was running over $450 by the end of 2025.

The reason is straightforward: memory manufacturers pivoted their limited production capacity toward the specialized high-bandwidth memory that AI chips require, and consumer devices got squeezed.

The downstream effects extend well beyond AI. Growth in AI compute demand is outpacing Moore's Law by more than 2x. Global data center investment surged over 50% in 2024 alone, and the buildout is expected to continue accelerating through the end of the decade. As a result, hardware producers are delaying new product launches, and consumers buying anything with memory in it, from laptops to servers, are paying more because of competition for the same underlying components.

New memory fabrication capacity takes years to bring online, and demand shows no sign of slowing. Even if manufacturing catches up, it won't be the only problem to solve.

The energy question

Powering this new generation of AI infrastructure is a challenge on its own. U.S. data centers consumed an estimated 183 terawatt-hours of electricity in 2024, over 4% of the country's total. The IEA projects that figure will more than double by 2030, growing roughly 15% per year. AI's share within that demand could climb from roughly 10% today to 35-50% by decade's end. Some regions are already facing seven-year waiting lists for grid connections.

Chart: U.S. Data Center Electricity Consumption, total in terawatt-hours. Source: Pew Research / IEA
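To see how "roughly 15% per year" lines up with "more than double by 2030," here is a small Python sketch of the compounding arithmetic. It assumes a constant annual growth rate applied to the 2024 estimate, which is my simplification for illustration and not how the IEA builds its projection.

```python
import math

BASE_TWH = 183.0    # estimated U.S. data center consumption in 2024 (from the text)
GROWTH_RATE = 0.15  # "roughly 15% per year" (from the text), assumed constant here


def project(base: float, rate: float, years: int) -> float:
    """Compound a starting value forward at a fixed annual growth rate."""
    return base * (1 + rate) ** years


def doubling_time(rate: float) -> float:
    """Number of years needed to double at a fixed annual growth rate."""
    return math.log(2) / math.log(1 + rate)


twh_2030 = project(BASE_TWH, GROWTH_RATE, 2030 - 2024)
multiple = twh_2030 / BASE_TWH

print(f"2030 projection: {twh_2030:.0f} TWh ({multiple:.2f}x the 2024 level)")
print(f"Doubling time at 15% per year: {doubling_time(GROWTH_RATE):.1f} years")
```

Under that assumption, six years of 15% growth works out to roughly 2.3x the 2024 level, with a doubling time of about five years, which is consistent with the "more than double" framing.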

Public awareness tracked the demand curve. Google Trends interest in "data center energy" held flat through most of 2024, then surged nearly 7x over 2025 as utility bills, grid strain, and new facility proposals became local news stories. The tension is real: communities near proposed data center sites are pushing back on noise, water usage, and electricity costs, and this is increasingly becoming a regulatory and policy issue at the state level.

Consumer Adoption and Scale

The raw growth numbers in 2025 were staggering, and they deserve context.

ChatGPT's weekly active user count tells the clearest story. OpenAI reported 50 million weekly users in January 2023. By August of that year, they hit 100 million. The number climbed to 250 million by October 2024. In February 2025, it crossed 400 million. And by September 2025, it reached 800 million weekly active users. That's a 16x increase in under three years, with the steepest acceleration happening in 2025 alone.

Chart: ChatGPT Weekly Active Users. Source: OpenAI

Top Gen AI Services (2025)

| # | Service | YoY rank change |
|---|---|---|
| 1 | ChatGPT | unchanged |
| 2 | Claude | +3 |
| 3 | Perplexity | +3 |
| 4 | Gemini | new |
| 5 | Character.AI | -3 |

By DNS volume. Cloudflare Radar 2025

ChatGPT is still the dominant force, but the competitive landscape shifted significantly. Four single-purpose tools (Codeium, Wordtune, Poe, Tabnine) dropped out of the top 10 entirely, replaced by broader platforms like Gemini, Grok, and DeepSeek. The market consolidated around general-purpose AI assistants rather than niche utilities.

What are all those users actually doing? OpenAI published its first large-scale usage study in September 2025, classifying 1.1 million conversations over a year. Writing and practical guidance each accounted for roughly 28% of usage, followed by information-seeking at 21%. Technical help, including coding, made up just 7.5%.

ChatGPT Conversation Topics (1.1M sampled, May 2024 - Jun 2025)

Source: Chatterji et al., "How People Use ChatGPT" (NBER, Sep 2025)

AI meets consumers where they are

That growth played out in the surfaces where consumers already spend time. AI stopped being just a destination you visit and started embedding itself directly into search results, browsers, and operating systems.

Google's AI Overviews rolled out across Search in 2025, reaching 1.5 billion monthly users globally (Search Engine Journal). AI-generated summaries now often sit above traditional results for a growing share of queries. But the bigger move was "AI Mode," a dedicated conversational interface launched directly within Google Search. That's significant because Search is Google's largest product. Embedding a full AI experience inside it signals that even Google sees the traditional ten blue links as insufficient for how people want to find information.

Then came the browser wars. Google embedded AI directly into Chrome with a sidebar assistant and agentic browsing capabilities. Microsoft launched Copilot Mode in Edge, putting an AI assistant in front of hundreds of millions of existing users.

Meanwhile, a new wave of startups skipped the add-on approach entirely: Perplexity launched its Comet browser with a built-in AI agent, OpenAI released its own Chromium-based browser, and at least three more AI-native browsers launched before year's end. The browser itself became the battleground, not just a place to bolt on AI features.

That expansion comes with tradeoffs. Brave published research showing AI browsers are vulnerable to indirect prompt injection, where malicious content on a webpage can hijack the AI agent to extract personal data without user input. OpenAI later acknowledged that prompt injection, like social engineering, "may never be fully solved."

Consumers adapted fast. Businesses faced an entirely different set of challenges.

Business Adoption and Usage

The enterprise conversation around AI moved past the hype phase in 2025. Early in the year, most organizations were still figuring out where AI fits. By the end of the year, clear patterns had emerged.

In conversations with colleagues throughout the year, there was consistent alignment on three workstreams of enterprise AI adoption:

The experimentation reality check

Microsoft introduced the concept of the "Frontier Firm" in its 2025 Work Trend Index, describing companies that have reorganized around hybrid human-and-agent teams. According to their data, these firms are seeing 3x higher returns than slower adopters, with the average organization deploying AI across seven or more business functions.

Microsoft frames AI integration as progressing through three phases: AI as an assistant (answering questions, generating drafts), AI as a digital colleague (handling defined workflows with some autonomy), and AI as an autonomous process runner with human oversight. Most organizations in 2025 were somewhere between the first and second phase.

That progression raises a question about what roles look like as organizations move further along the curve. When agents handle more structured, repeatable work, the value of employees who primarily execute tasks starts to narrow. The value shifts toward people who can direct agents toward broader objectives and make judgment calls that AI can't. Several teams I spoke with in 2025 were already rethinking job descriptions with this in mind.

of generative AI pilot programs fail to deliver measurable ROI

MIT found the root cause isn't model quality. It's a "learning gap" in enterprise integration: organizations struggling to move from a demo to a system that actually works in their environment (MIT).

Gartner reinforced this with their prediction that over 40% of agentic AI projects will be abandoned by the end of 2027, citing escalating costs, unclear business value, and what they termed "agent washing" by vendors rebranding existing products. I explored this gap in more detail in From Pilots to Production: most failures trace back to the operating model, not the technology itself.

Where it actually worked: the individual

While enterprise-wide AI projects struggled to scale, the clearest wins in 2025 came back to the individual: people using AI to work faster and think bigger. Anthropic's Economic Index tracked millions of real-world AI conversations and found that 52% of usage was augmentation, AI enhancing work rather than replacing it.

The biggest wins showed up in how individuals approached their daily work. Drafting that used to take a full morning got done in minutes. Research that meant hours of reading condensed into focused summaries. A developer could go from idea to working prototype the same afternoon. None of it was flawless, but it fundamentally changed how fast one person could move.

But those gains weren't spread evenly. Code creation doubled as a share of AI conversations, and mid-to-high wage knowledge workers (developers, analysts, designers) showed the highest usage, while both the lowest-wage and highest-paid roles lagged. AI in 2025 was primarily a tool for the broad middle of the knowledge economy, accelerating work that already required judgment and creativity. With adoption moving this fast across industries, the question of who sets the rules was inevitable.

Regulation and Policy

As AI capabilities accelerated in 2025, governments moved to respond, though not always in the same direction.

The federal pivot

The Biden administration's October 2023 Executive Order on AI Safety established reporting requirements for frontier model developers, directed agencies to develop AI governance frameworks, and created new standards for AI safety testing. It was the broadest federal AI policy to date, but its scope treated AI like a mature industry where outcomes could be predictably managed. The compliance burden disproportionately favored large incumbents with the legal and governance infrastructure already in place, while constraining the rapid iteration that a still-emerging field needed.

The Trump administration took a different approach. After revoking the Biden order in early 2025, the White House issued an Executive Order titled "Removing Barriers to American Leadership in Artificial Intelligence" and a corresponding Action Plan that prioritized acceleration over compliance. Where the Biden framework added requirements that favored companies large enough to absorb them, the new approach aimed to lower barriers for startups and emerging players entering the AI space.

The White House AI Action Plan organized around three pillars:

In practice, the shift moved federal AI policy from "regulate first, innovate within guardrails" to "innovate first, address harms as they emerge," with U.S.-China AI competition as the driving strategic concern. For businesses, the rollback reduced immediate compliance pressure. It remains to be seen whether this approach proves effective. What is clear is that Congress has not stepped in with federal AI standards, and in that absence, states have taken the lead.

State-level legislation

All 50 states introduced AI-related legislation in 2025 (NCSL). Colorado now requires impact assessments for AI systems used in employment, lending, and insurance. California signed seven AI bills into law covering frontier model safety, deepfake protections, and content disclosure. But introducing bills and passing them are different things: 38 states actually enacted AI laws. Whether these laws produce meaningful outcomes or just compliance overhead remains an open question.

State AI Legislation

Congress hasn't passed AI legislation, and in that vacuum, states are setting their own terms. For companies operating nationally, the result is a growing compliance patchwork that's only likely to get more complex as these laws take effect.

The EU AI Act takes effect

The European Union's AI Act, adopted in 2024, began its phased enforcement in 2025. It started the year narrowly, banning practices like social scoring and manipulative AI, then expanded by late 2025 to cover general-purpose model providers like the companies behind ChatGPT, Gemini, and Claude. The Act classifies AI systems into risk tiers, from minimal risk (no obligations) to unacceptable risk (banned). High-risk systems face mandatory conformity assessments, documentation requirements, and human oversight provisions. Further enforcement is set for 2026, when rules for high-risk AI in areas like hiring and credit scoring take effect alongside new transparency requirements. For companies deploying AI globally, it's setting the de facto international standard, much as GDPR did for data privacy.

| Risk tier | Examples | Obligations |
|---|---|---|
| Minimal Risk | AI-enabled games | No obligations |
| Limited Risk | Chatbots, deepfakes | Transparency requirements |
| High Risk | Hiring, credit, law enforcement | Documentation, human oversight |
| Unacceptable | Biometric surveillance | Banned |

The regulatory landscape heading into 2026 splits along different priorities. The U.S. federal government favors growth and acceleration, while the EU and several U.S. states prioritize predictability and control. Critics of the EU approach see it repeating GDPR's legacy of cookie banners and compliance friction rather than delivering meaningful protection. At the same time, national security leaders worry that overly cautious regulation could cause Western AI to fall behind Chinese competitors that may not face the same constraints.

AI and the Open Web

This is the section that hits closest to my day-to-day work, and it's where 2025's changes felt most personal. Internet traffic grew 19% globally and 28% in the U.S. (Cloudflare Radar), but who's driving that growth tells a different story.

The changing composition of web traffic

One of the most underreported shifts of 2025 was who is actually visiting websites. Cloudflare's numbers are striking: humans are barely in the majority anymore. Of all HTML requests hitting their network, AI bots account for 4.2%, but they're only part of the equation.

Non-AI bots make up nearly half of all web traffic. The highest-volume verified bots outside of Googlebot and AI crawlers fall into three familiar categories: search engine crawlers, SEO tools, and advertising and marketing platforms. Even outside the AI category, content discovery and monetization continue to drive a significant share of internet activity.

Web Traffic Composition

Analyzing the purpose behind that crawling reveals where things are heading. Of all AI bot activity, training dwarfs everything else, accounting for roughly 80% of crawl volume compared with AI-powered search retrieval, user-requested browsing, or undeclared purposes. The appetite for web data as training fuel shows no signs of slowing. OpenAI's GPTBot, its dedicated training crawler, roughly tripled its share among AI crawlers year over year, making it the single largest AI bot by volume. The race to build the next generation of models is showing up in server logs.

Where consumers are going

The generative AI platforms themselves saw explosive growth. ChatGPT still dominates consumer web visits, but it's gradually losing share as competitors gain ground. Gemini more than doubled between April and September, and by November had reached nearly 15%. Grok grew from essentially zero to 2.5%, largely driven by X/Twitter distribution. DeepSeek's early-year buzz faded steadily (SimilarWeb).

Chart: Gen AI web visit share. Data from SimilarWeb, April-September 2025.

The crawl-to-click imbalance

Consumers are increasingly going to AI platforms, and the scale is hard to overstate. By the end of 2025, ChatGPT ranked as the fifth most-visited website globally, while Gemini climbed from outside the top 50 in March to 16th by year's end (SimilarWeb). But those platforms are built on content they take from publishers. Cloudflare's data revealed a stark imbalance: for every referral click that OpenAI sends back to a publisher, its crawlers make roughly 1,400 requests to that publisher's site. For Anthropic, the ratio is far more extreme at nearly 71,000 to one.

1,400:1

OpenAI crawl-to-referral ratio

For every referral click sent back to publishers, OpenAI's crawlers make ~1,400 requests

70,900:1

Anthropic crawl-to-referral ratio

ClaudeBot crawls nearly 71,000 pages for every referral click it sends back

Source: Cloudflare - AI Search Crawl-to-Refer Ratio
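If you want to estimate the same kind of ratio for your own site, a rough version can be computed from server logs. The sketch below is a simplified illustration, not Cloudflare's methodology: it assumes crawls can be identified by a user-agent token and referrals by the hostname in the Referer header. The `PLATFORMS` mapping, the `Request` record, and the sample data are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class Request:
    user_agent: str  # raw User-Agent header
    referer: str     # raw Referer header, "" when absent


# Hypothetical mapping for illustration: platform -> (crawler UA token, referrer domain).
PLATFORMS = {
    "OpenAI": ("GPTBot", "chatgpt.com"),
    "Anthropic": ("ClaudeBot", "claude.ai"),
}


def crawl_to_refer(requests):
    """Return crawl requests per referral click for each platform (None if no referrals)."""
    crawls, referrals = Counter(), Counter()
    for req in requests:
        host = urlparse(req.referer).hostname or ""
        for platform, (ua_token, ref_domain) in PLATFORMS.items():
            if ua_token.lower() in req.user_agent.lower():
                crawls[platform] += 1
            elif host == ref_domain or host.endswith("." + ref_domain):
                referrals[platform] += 1
    return {
        p: (crawls[p] / referrals[p] if referrals[p] else None) for p in PLATFORMS
    }


# Synthetic log sample: 6 GPTBot crawls, 2 ChatGPT referrals, 4 ClaudeBot crawls.
sample = (
    [Request("Mozilla/5.0; compatible; GPTBot/1.2", "")] * 6
    + [Request("Mozilla/5.0 (Macintosh)", "https://chatgpt.com/")] * 2
    + [Request("Mozilla/5.0; compatible; ClaudeBot/1.0", "")] * 4
)
ratios = crawl_to_refer(sample)
print(ratios)
```

On the synthetic sample this gives 3.0 for OpenAI and `None` for Anthropic, since no referral clicks were recorded. Real logs would also need filtering to HTML page requests and handling for spoofed user agents.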

This created real financial pressure. Many publishers saw meaningful traffic declines as AI platforms absorbed queries that previously drove clicks to their sites. Some responded by signing licensing deals with AI companies, turning the same content being crawled into a new revenue stream. Others pursued legal action. The economics are still shaking out, but the underlying tension is clear: the value exchange between AI platforms and the publishers they depend on hasn't found its equilibrium yet.

But the crawl-to-click imbalance is only half the equation. The same technology that summarizes and surfaces content has also made it dramatically cheaper to produce content at scale. Publishers now compete for shrinking traffic against an ever-growing volume of machine-written pages, while platforms struggle to separate original content from synthetic noise.

The content quality arms race

As AI models train on web content and then generate new content that gets indexed, you get a feedback loop. Model outputs become training inputs for the next generation, dramatically increasing the total volume of content on the web.

AI generates content → Content is ingested → AI trains on that content → Process repeats ↻

Each cycle adds more content, making it harder to distinguish original work from synthetic material at scale.

Google spent 2025 trying to break this cycle across completely different product areas. YouTube, Search spam systems, and core ranking all got distinct responses to the same underlying problem, revealing just how broadly AI-generated content is reshaping the company's business.

YouTube Policy

Required creators to disclose AI-generated or altered content in videos. Targets deepfakes and synthetic media on the platform.

Search Spam Update

Aggressive action against AI-generated spam and manipulative content designed to game search rankings at scale.

GIST Algorithm

Uses Gemini to assess source authority and trustworthiness, shifting from keyword matching to AI-powered credibility signals.

The pattern is telling. A single company is fighting the same problem across video, search quality, and core ranking, each with different tools but the same underlying cause: AI-generated content is growing faster than existing quality signals can filter it. GIST is perhaps the most interesting response because it uses AI itself to assess trustworthiness, effectively deploying AI to police AI.

Publisher pushback

The tension between AI companies and publishers escalated throughout 2025. The New York Times and Chicago Tribune both sued Perplexity for copyright infringement, alleging the company built its business on unauthorized scraping and distribution of their journalism. The Times' separate lawsuit against OpenAI advanced toward trial after a federal judge allowed the core copyright claims to proceed.

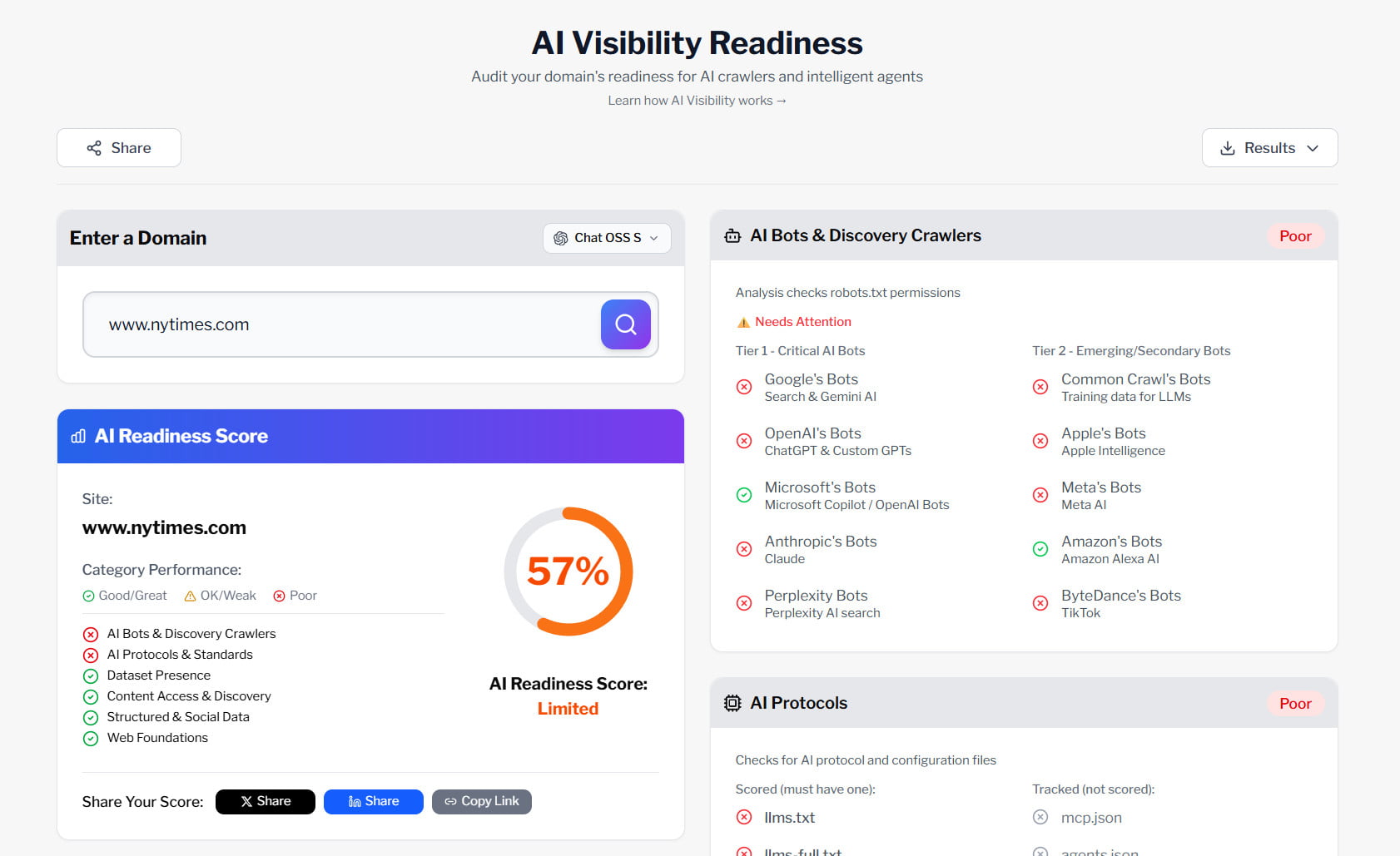

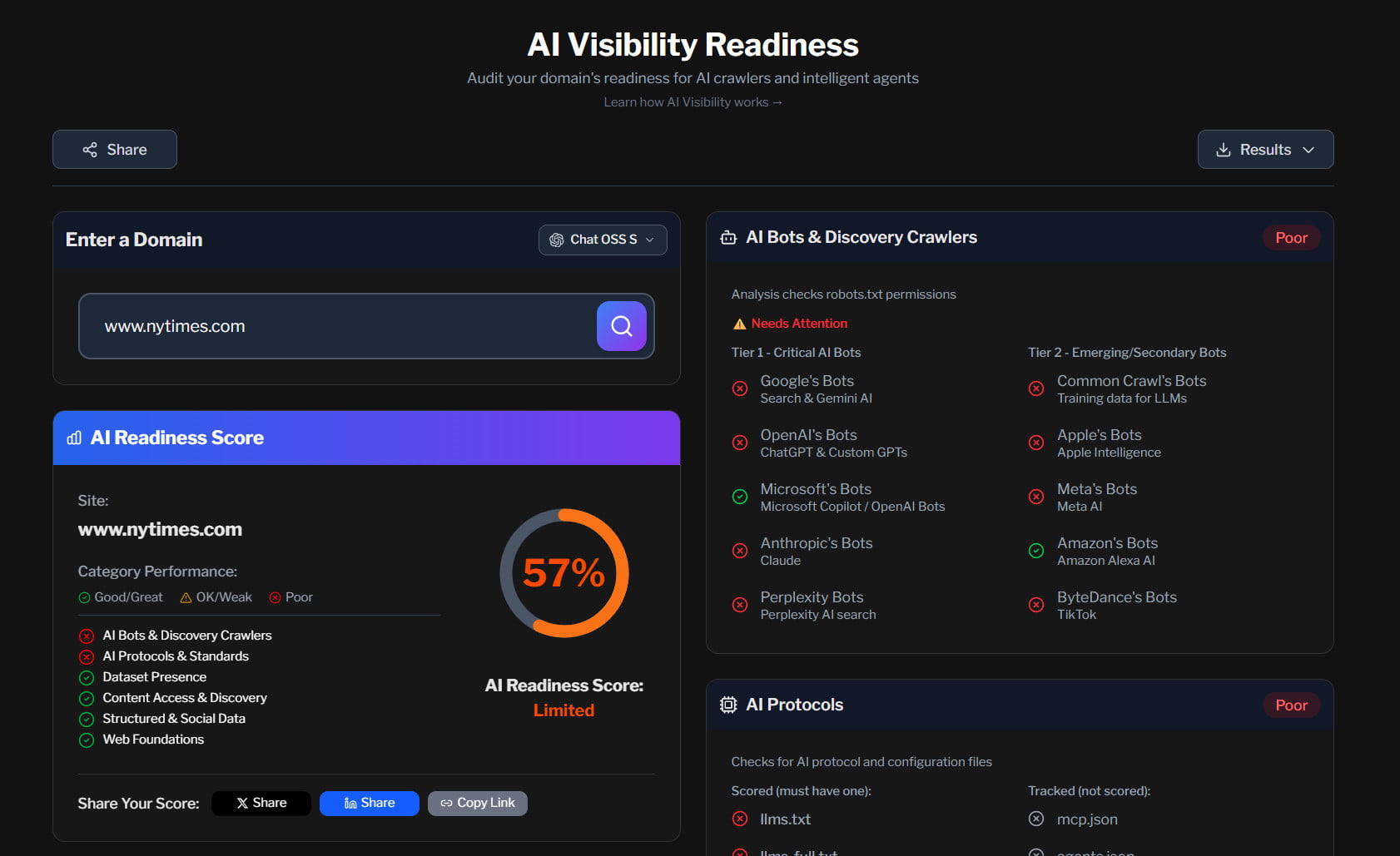

Some publishers took matters into their own hands. NYTimes.com, for example, explicitly blocks over a dozen AI crawlers in its robots.txt file, including GPTBot, ClaudeBot, ChatGPT-User, Google-Extended, PerplexityBot, and Bytespider, among others. It's one of the most aggressive stances in the industry. When I run sites through the AI Readiness Analyzer, this kind of blocking is immediately visible in the crawl accessibility assessment. It's a real-time indicator of how publishers are drawing lines.

Screenshot from AI Readiness Analyzer, a free tool on this site

But robots.txt is a directive, not a barrier. Ignoring it used to be the domain of spammers and scrapers. Now well-funded AI companies are doing the same. Perplexity was caught using IP rotation and user-agent masking to bypass publisher restrictions entirely, forcing the industry to consider whether the enforcement tools built for bad actors need to be applied to mainstream companies too.
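The "directive, not a barrier" point is easy to see in code. Here is a minimal sketch using Python's standard-library `urllib.robotparser`: the crawler itself parses the file and decides whether to honor it. The robots.txt content and the `example.com` URL below are made up for illustration and are not copied from any real site.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt, not a copy of any real site's file.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def allowed_agents(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Report, per user agent, whether robots.txt permits fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


result = allowed_agents(
    SAMPLE_ROBOTS_TXT,
    ["GPTBot", "ClaudeBot", "Googlebot"],
    "https://example.com/article",
)
print(result)

# Nothing here stops a request from being sent. A well-behaved crawler runs a
# check like this and skips disallowed URLs; a badly behaved one never calls it.
```

With this sample file, GPTBot and ClaudeBot are disallowed and Googlebot is allowed. The check runs entirely on the client side, which is why enforcement against crawlers that ignore it has to happen elsewhere, for example at the CDN or firewall level.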

The other side: sites working with AI

Not everyone is blocking. A growing number of sites are going the opposite direction, making their content more accessible to language models. The llms.txt standard, a structured file that helps AI systems understand a site's content and purpose, saw its community-submitted directory grow from 112 sites in January 2025 to over 2,000 by January 2026. Actual adoption is likely much higher since most site owners won't submit to a directory.

The growth accelerated sharply in late 2025, nearly tripling in just three months. These aren't just small blogs. The directory includes major developer platforms, SaaS companies, and documentation sites that decided discoverability by AI is worth optimizing for. Our AI Readiness Analyzer checks for llms.txt alongside other AI configuration protocols as part of its discovery assessment.
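For readers who haven't seen one, an llms.txt file is plain markdown. The sketch below parses a made-up example based on my reading of the public llms.txt proposal (a title heading, a blockquote summary, and sections of links). The sample content, the `parse_llms_txt` helper, and the regular expression are hypothetical and far looser than a real validator would be.

```python
import re

# Made-up file for illustration; all URLs are placeholders.
SAMPLE_LLMS_TXT = """\
# Example Docs

> Short summary of what this site covers.

## Docs

- [Quick start](https://example.com/quickstart.md): Install and first steps
- [API reference](https://example.com/api.md)

## Optional

- [Changelog](https://example.com/changelog.md)
"""

# Matches "- [title](url)" with an optional ": note" suffix.
LINK = re.compile(r"^- \[(?P<title>[^\]]+)\]\((?P<url>[^)]+)\)(?::\s*(?P<note>.*))?$")


def parse_llms_txt(text: str) -> dict:
    """Extract the title, summary, and per-section link lists from llms.txt text."""
    doc = {"title": None, "summary": None, "sections": {}}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and doc["title"] is None:
            doc["title"] = line[2:].strip()
        elif line.startswith("> ") and doc["summary"] is None:
            doc["summary"] = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            doc["sections"][current] = []
        elif current is not None and (m := LINK.match(line)):
            doc["sections"][current].append((m["title"], m["url"], m["note"] or ""))
    return doc


parsed = parse_llms_txt(SAMPLE_LLMS_TXT)
print(parsed["title"], list(parsed["sections"]))
```

The appeal of the format is that it stays readable for humans while giving a language model a short, curated map of the pages a site owner considers most useful.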

llms.txt Adoption

Source: llms.txt Directory

The open web is splitting into two camps. One is putting up walls with bot blocks and lawsuits. The other is laying out a welcome mat with structured AI protocols and content optimized for AI discovery. Both responses are rational given the current economics, and the question heading into 2026 is whether the economics shift enough to make cooperation the default. If you're interested in the practical side of navigating these choices, I go deeper in How to Improve AI Visibility.

2025 was the year AI stopped being a novelty and started reshaping how the web works. The changes covered in this article are just the foundation for what I think is coming next.

Where we go from here

That covers the landscape: explosive adoption, enterprise experimentation colliding with organizational reality, a hardware arms race reshaping the supply chain, and an open web caught between AI's appetite for content and publishers exploring how to operate and drive value in this new landscape.

The natural question is where all of this goes next. In Part 2: AI-Driven Shifts for 2026, I share the major shifts I see developing based on these trends and patterns.
